There’s a pattern in how financial services adopt new technology. A capability gets hyped for a couple of years, compliance teams stay cautious, and then something shifts – a big enough efficiency gap opens up, or a regulator says something pointed enough – and adoption accelerates fast. Agentic AI looks like it’s hitting that inflection point right now.

It’s different from what came before. Not just smarter automation – something that actually reasons, plans, and executes across multi-step workflows without being told what to do at each turn. For KYC specifically, where the manual workload has been growing for years without a proportionate improvement in outcomes, the implications are significant.

What Makes AI “Agentic” – and Why It Matters

The term gets used loosely, so it’s worth being specific. Agentic AI refers to systems that can set sub-goals, take sequences of actions, and adapt based on what they find – operating autonomously toward an objective rather than waiting for instructions at each step.

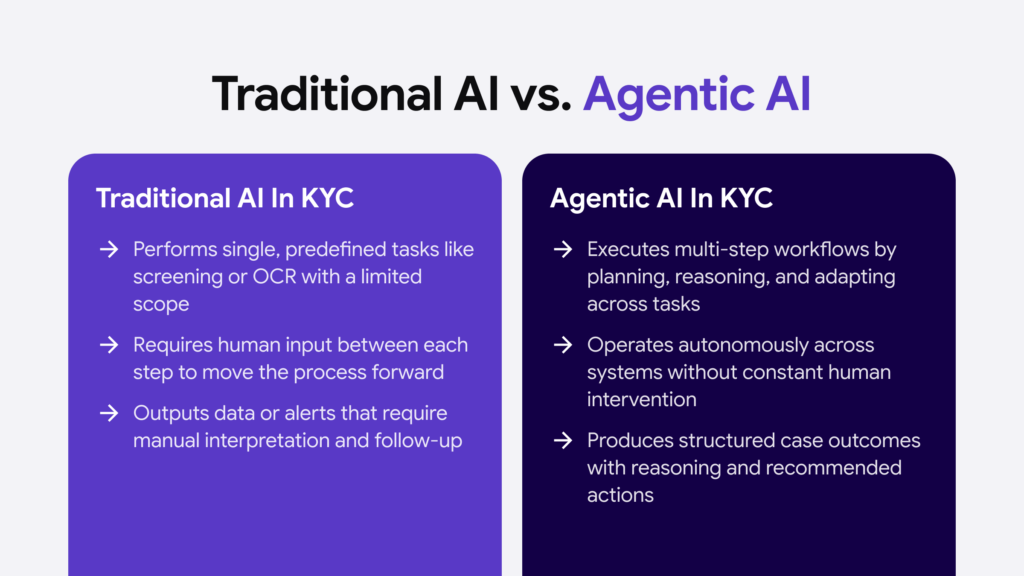

That’s different from the AI most compliance teams are already familiar with. Screening tools that flag sanctions matches, OCR that extracts text from ID documents, models that assign risk scores – these are useful, but they’re reactive and narrowly scoped. They do one thing and hand the result to a human.

An agentic system works differently. Give it a KYC case, and it can pull the customer’s documents, run them through validation checks, cross-reference the individual against PEP and sanctions databases, search for adverse media, assess the source of wealth, flag inconsistencies for review, and generate a structured case summary – without a compliance analyst manually moving between systems at each stage.

The UK government defines agentic AI as systems that “can behave and interact autonomously in order to achieve their objectives.” In a KYC context, the objective is to achieve a complete, accurate, and defensible customer risk assessment.

The Issue It Is Solving

The financial industry detects roughly 2% of global financial crime flows, according to Interpol, despite compliance spending increasing by up to 10% a year in major markets for most of the past decade. That gap is partly a regulatory complexity problem and partly a capacity problem. Manual KYC processes are slow, inconsistent, and expensive to scale.

False positive rates in AML transaction monitoring sit between 90 and 95% at many institutions, which means compliance teams spend the vast majority of their time clearing alerts that lead nowhere, leaving less bandwidth for the ones that matter.

This is not a criticism of the people doing the work. It is a structural problem with how the work is designed. Rule-based systems do not adapt, static processes do not scale, and the volume of regulatory requirements is not going down.
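To put the false positive figures above in concrete terms, here is a small back-of-the-envelope sketch. The alert volume (1,000) and the 15 minutes of analyst time per alert are illustrative assumptions, not figures from any institution; only the 95% rate comes from the range quoted above.

```python
def alert_workload(alerts: int, false_positive_rate: float, minutes_per_alert: float):
    """Split an alert queue into genuine and false alerts and estimate
    the analyst hours spent clearing the false ones."""
    false_alerts = round(alerts * false_positive_rate)
    true_alerts = alerts - false_alerts
    hours_on_false_alerts = false_alerts * minutes_per_alert / 60
    return true_alerts, false_alerts, hours_on_false_alerts


# Hypothetical inputs: 1,000 alerts, 95% false positives, 15 minutes each.
true_alerts, false_alerts, hours = alert_workload(1000, 0.95, 15)
print(true_alerts, false_alerts, hours)
```

Under those assumptions, only 50 of the 1,000 alerts deserve attention, while clearing the other 950 takes well over 200 analyst hours.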

What agentic AI changes is the ratio between human effort and meaningful output. A large Dutch financial institution achieved a 90% reduction in onboarding time and cut staff workload by 30% using AI innovations for KYC and compliance processes.

JPMorgan’s AI-powered KYC engine has increased productivity by up to 90% on tasks like document verification, sanctions checks, and risk tier assignment. These are not projections – they are live results from institutions that started building this infrastructure early.

Where Agentic AI Slots Into a KYC Workflow

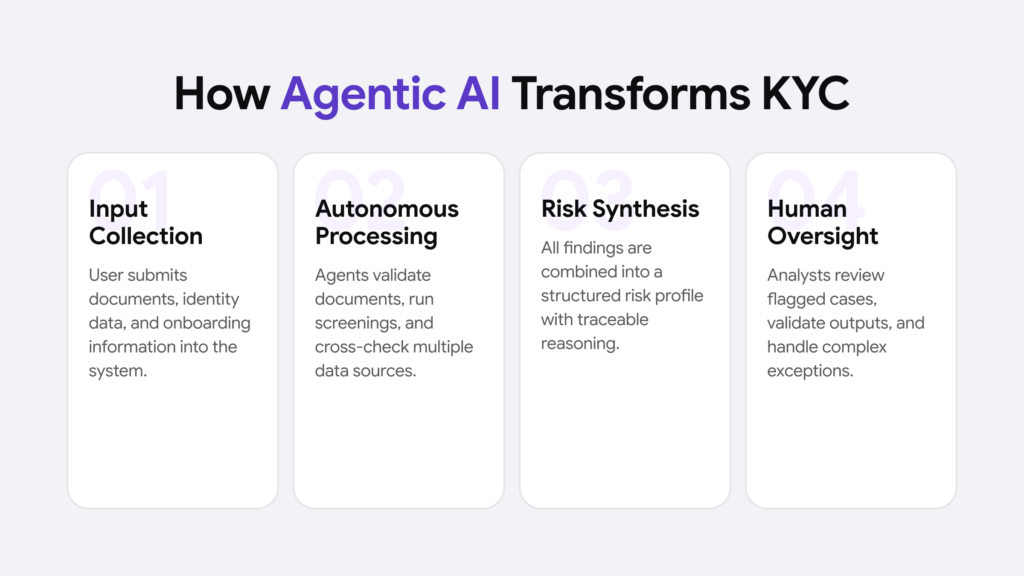

The clearest way to understand what changes is to walk through what a KYC case actually involves, and where agents fit in.

At onboarding, a user submits their documents. Today, a compliance analyst typically checks document validity, runs the name through screening databases, reviews any hits, assesses the risk profile, and decides whether the case needs enhanced due diligence.

Each of those steps might involve a different system, a different login, and a different output format. The analyst is the connective tissue – manually passing information between tools.

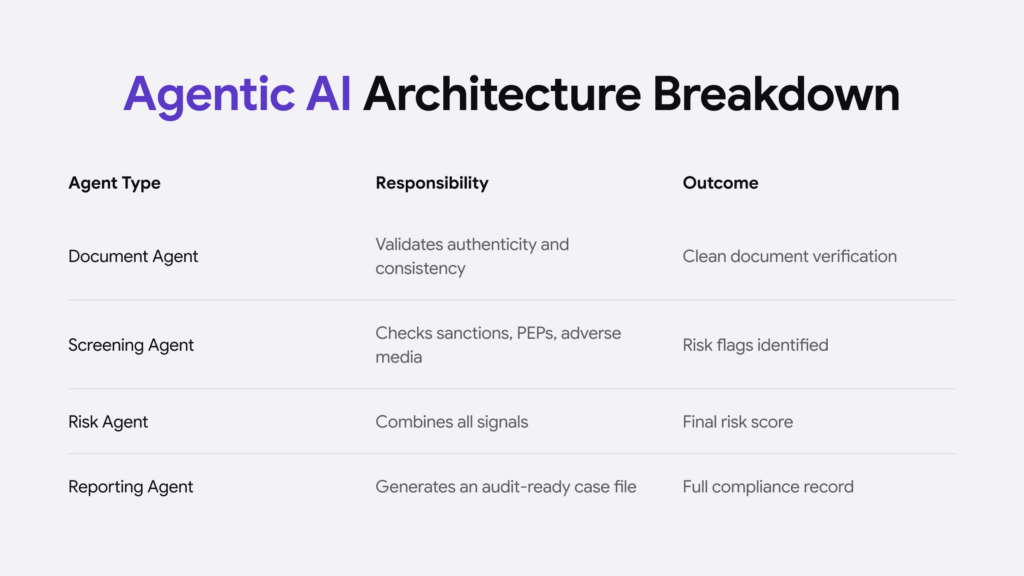

An agentic architecture removes that bottleneck. A root agent orchestrates a set of specialist sub-agents, each responsible for a specific task:

- Document verification – checking authenticity, consistency across documents, expiry dates, and format validity

- Identity screening – running names and associated entities against sanctions lists, PEP registries, and adverse media sources

- Source of wealth assessment – reviewing financial documents and cross-referencing declared income against external signals

- Risk scoring – synthesizing all of the above into a structured risk profile with traceable reasoning

- Case documentation – generating the compliance record, including the evidence chain behind each decision

The analyst’s job shifts from executing those steps to reviewing the output, handling exceptions, and making final calls on the cases the system flags as genuinely complex. Some companies’ experience suggests manual intervention should be reserved for the highest-complexity exceptions – typically fewer than 15 to 20% of all cases – with human experts focused on validation and coaching the AI agent workforce.
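The root-agent pattern described above can be sketched in a few lines. This is a simplified illustration, not a reference implementation: every name here (`KycCase`, `run_case`, the sub-agent functions, the one-entry watchlist) is hypothetical, the sub-agents are plain functions standing in for real model-backed agents, and source of wealth assessment is left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class KycCase:
    customer_id: str
    names: list[str]
    documents: list[dict]
    findings: dict = field(default_factory=dict)       # sub-agent name -> result
    evidence: list[str] = field(default_factory=list)  # audit trail of each step
    needs_human_review: bool = False


def verify_documents(case: KycCase) -> dict:
    expired = [d["type"] for d in case.documents if d["expired"]]
    return {"passed": not expired, "expired": expired}


def screen_identity(case: KycCase) -> dict:
    # Placeholder watchlist; a real agent would query sanctions, PEP,
    # and adverse media sources instead.
    watchlist = {"ACME SHELL LTD"}
    hits = [n for n in case.names if n.upper() in watchlist]
    return {"passed": not hits, "hits": hits}


def score_risk(case: KycCase) -> dict:
    # Synthesizes earlier findings and keeps the reasons traceable.
    failed = [name for name, result in case.findings.items() if not result["passed"]]
    return {"passed": True, "tier": "high" if failed else "low", "reasons": failed}


PIPELINE = [
    ("document_verification", verify_documents),
    ("identity_screening", screen_identity),
    ("risk_scoring", score_risk),
]


def run_case(case: KycCase) -> KycCase:
    """Root agent: run each sub-agent in order and record the evidence chain."""
    for name, agent in PIPELINE:
        result = agent(case)
        case.findings[name] = result
        case.evidence.append(f"{name}: {result}")
    # Only high-risk cases are routed to an analyst.
    case.needs_human_review = case.findings["risk_scoring"]["tier"] == "high"
    return case
```

The design point is that the analyst no longer carries data between tools. Each sub-agent writes to a shared case record, and the evidence list gives the reviewer the reasoning behind the final tier.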

Continuous Monitoring

Onboarding is the obvious starting point, but agentic AI’s impact on ongoing monitoring is arguably more significant.

Periodic reviews – the annual or triennial refresh cycle most institutions still run – exist largely because continuous monitoring at scale was operationally impossible. You cannot have compliance analysts re-reviewing every user every time something changes in the world.

Agents can do this. KYC verification no longer has to be a point-in-time event. Agents can watch for triggering events – a user appearing on a new sanctions list, a change in company ownership structure, adverse media coverage, unusual transaction patterns – and initiate a review the moment one occurs rather than waiting for the next scheduled cycle.
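As a rough sketch of what event-driven review might look like, the snippet below turns a stream of triggering events into a prioritized review queue. The trigger names mirror the examples above. The priority values, the `Event` type, and the `reviews_to_open` function are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Trigger(Enum):
    SANCTIONS_LISTING = "sanctions_listing"
    OWNERSHIP_CHANGE = "ownership_change"
    ADVERSE_MEDIA = "adverse_media"
    UNUSUAL_TRANSACTIONS = "unusual_transactions"


@dataclass(frozen=True)
class Event:
    customer_id: str
    trigger: Trigger
    detail: str


# Hypothetical urgency ranking: 1 is the most urgent.
PRIORITY = {
    Trigger.SANCTIONS_LISTING: 1,
    Trigger.UNUSUAL_TRANSACTIONS: 2,
    Trigger.OWNERSHIP_CHANGE: 2,
    Trigger.ADVERSE_MEDIA: 3,
}


def reviews_to_open(events, monitored_customers):
    """Return one review per affected customer, most urgent first."""
    reviews = {}
    for event in events:
        if event.customer_id not in monitored_customers:
            continue  # not one of our customers, nothing to review
        review = reviews.setdefault(
            event.customer_id,
            {"customer_id": event.customer_id,
             "priority": PRIORITY[event.trigger],
             "reasons": []},
        )
        review["priority"] = min(review["priority"], PRIORITY[event.trigger])
        review["reasons"].append(event.trigger.value)
    return sorted(reviews.values(), key=lambda r: r["priority"])
```

Several events for the same customer collapse into a single review that carries every reason, so the reviewing agent or analyst sees the full picture in one case rather than in scattered alerts.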

Corporate users’ information can be updated automatically by connecting directly to company registries, pulling the latest documentation, and extracting UBO information without anyone having to manually check.

This is the connection to perpetual KYC: the technology that makes continuous monitoring practically achievable at scale is, essentially, agentic AI.

The Governance Questions Nobody Has Fully Answered Yet

It would be incomplete to talk about agentic AI in KYC without addressing the governance issues, because they’re real and the industry hasn’t yet sorted them out.

Only 2% of companies had adequate AI guardrails in place in 2025, according to Infosys, and 95% of respondents had experienced at least one AI incident, with 77% of those incidents resulting in financial losses. The risks specific to agentic KYC systems include:

- Model hallucination – an agent citing a sanctions match that does not exist, or generating a risk assessment based on fabricated reasoning

- Data poisoning – manipulating training data to make an agent systematically overlook certain risk signals

- Model drift – agents performing well against known patterns and degrading gradually against new ones without anyone noticing

- Accountability gaps – when an agent makes a compliance decision that turns out to be wrong, who owns it?

The OCC expanded its examination scope in late 2025 to include AI model risk management, which signals where regulatory expectations are heading. The EU AI Act classifies certain high-risk AI applications in financial services, and while it does not address agentic systems specifically, the trajectory is clear: regulators want explainability, audit trails, and human oversight mechanisms built in from the start – not retrofitted after deployment.

The institutions doing this well are treating the human-in-the-loop not as a workaround for AI limitations, but as a deliberate design choice. Agents handle the volume. Humans make the calls that require judgment, and they review enough of the agent’s work on a sample basis to catch drift before it becomes a compliance problem.
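One way to picture sample-based review is the sketch below: draw a random sample of agent decisions, have analysts label them independently, and compare the disagreement rate with a baseline. The function names, the 10% sample rate, and the 5-point tolerance are all assumptions chosen for illustration. A real programme would set them from its own risk appetite.

```python
import random


def sample_for_review(decisions, rate, seed=None):
    """Pick a random subset of agent decisions for human re-review."""
    rng = random.Random(seed)
    if not decisions:
        return []
    k = min(len(decisions), max(1, round(len(decisions) * rate)))
    return rng.sample(decisions, k)


def disagreement_rate(sampled, human_labels):
    """Share of sampled cases where the analyst's outcome differs from the agent's."""
    if not sampled:
        return 0.0
    disagreements = sum(
        1 for d in sampled if human_labels[d["case_id"]] != d["agent_outcome"]
    )
    return disagreements / len(sampled)


def drift_alert(rate, baseline, tolerance=0.05):
    """Flag possible drift when disagreement exceeds baseline plus tolerance."""
    return rate > baseline + tolerance


# Hypothetical usage: 100 agent decisions, 10% sampled for analyst review.
decisions = [{"case_id": i, "agent_outcome": "approve"} for i in range(100)]
sampled = sample_for_review(decisions, rate=0.1, seed=7)
```

Tracking the disagreement rate over time, rather than once, is what makes gradual degradation visible before it turns into a compliance problem.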

What “Ready” For Agentic AI Looks Like

The institutions that are deploying agentic KYC well share a few things in common. They did not start by buying a platform and hoping for integration. They started with a clean picture of their existing process – the specific steps, the handoffs, the exception types – and designed agent workflows around that.

They also did not try to automate everything at once. The sensible approach is to start where the volume is highest and the decisions are most straightforward, build confidence in the output quality, and extend automation incrementally to more complex case types.

Leading banks prioritize a scalable and modular structure with access to foundation models, an enterprise agentic framework, and granular process flows broken down into distinct, independent capabilities so that agents can be trained and optimized effectively.

Data infrastructure matters too – probably more than the AI itself. Agents are only as good as the information they can access. Fragmented customer records, inconsistent data quality across business lines, limited integration between internal systems and external data sources: all of these constrain what an agentic system can actually do in practice.

Conclusion

By 2026, 70% of new account onboarding is projected to be fully automated. Whether that figure holds up or not, the direction is obvious. The compliance function five years from now will look structurally different.

The shift is not about replacing compliance expertise. A well-designed agentic system still needs people who understand financial crime well enough to define what the agents should look for, catch what the models miss, and own the decisions that matter.