From a fake video of Ukraine’s president Zelensky to the viral “Tom Cruise” TikTok sensation, deepfakes have stormed the Internet, and yet many of their use cases don’t serve the greater good. This AI-generated synthetic media blends a sense of reality with events that never happened or characters that never existed.

The term “deepfake” took root on Reddit in 2017. It is now linked not only to innocent face-swap videos but also to misinformation, propaganda, porn and cyberattacks.

But are deepfakes really that bad? We look into modern AI, deepfakes’ origins, use cases, and risks to businesses and society as a whole.

What is a Deepfake?

A deepfake is a manipulated image, video, or audio recording designed to realistically depict people saying things they never said, or people who never existed at all. It’s a form of media created with deep learning algorithms, a branch of artificial intelligence (AI).

A common example of a deepfake is a fake video of a celebrity, a politician, an influencer, or another prominent figure, with content designed to shock. That’s why deepfakes can often damage reputations, cause distress and spread misinformation.

A sophisticated deepfake can trick people into thinking that it’s real. In general, deepfakes can be used in different ways and different industries, including sectors like entertainment and cybersecurity. Criminals also use this technology for deceptive practices. They collect information from emails, magazines, and social media posts to produce deepfakes. Sometimes, fake images and real sounds are modified and combined so that the deepfake appears more realistic to the general public.

What is Deepfake Technology?

Deepfake technology refers to the AI behind deepfakes: deep learning and machine learning algorithms that are able to learn from vast datasets. A common approach is a generative adversarial network (GAN), which pits two algorithms against each other.

The two algorithms, a generator and a discriminator, work like this when creating a deepfake image:

- The first algorithm generates a realistic replica of an image.

- The second algorithm detects if the replica is fake and identifies the differences between it and the original.

The first algorithm then adjusts the synthetic content based on feedback from the second algorithm. This cycle repeats as many times as needed, until the second algorithm can no longer detect any false elements.
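The feedback loop between the two algorithms can be illustrated with a deliberately tiny sketch. It assumes one-dimensional "data" (numbers near 4) instead of images, a two-parameter generator, and a logistic discriminator. All names and values here are illustrative assumptions, and real deepfake models are far larger neural networks.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = random.Random(seed)
    real_mean, real_std = 4.0, 0.5  # assumed "real" data distribution
    a, b = 1.0, 0.0                 # generator: fake = a * noise + b
    w, c = 0.0, 0.0                 # discriminator: p(real) = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.gauss(real_mean, real_std)
        noise = rng.gauss(0.0, 1.0)
        fake = a * noise + b        # first algorithm produces a replica

        # Second algorithm: raise p(real) on the real sample, lower it on the fake.
        p_real = sigmoid(w * real + c)
        p_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - p_real) * real - p_fake * fake)
        c += lr * ((1.0 - p_real) - p_fake)

        # First algorithm: adjust using the discriminator's feedback.
        p_fake = sigmoid(w * fake + c)
        grad_fake = (1.0 - p_fake) * w  # gradient of log p(real | fake) w.r.t. fake
        a += lr * grad_fake * noise
        b += lr * grad_fake

    return (a, b), (w, c)


if __name__ == "__main__":
    (a, b), (w, c) = train_toy_gan()
    print(f"generator: a={a:.3f}, b={b:.3f}; discriminator: w={w:.3f}, c={c:.3f}")
```

In a toy like this, the two sides can keep chasing each other rather than settling, which is one reason practical GAN training needs careful tuning.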

Consequently, deepfake technology learns from a lot of examples, quickly analyzing facial features, people’s expressions, details of human speech and behavior, and more. Reproducing such details by hand with the same accuracy would be an extremely complex task for a person.

The term “deepfake” surfaced in the media in 2017 after a Reddit moderator coined it and created a subreddit called “deepfakes”. It mainly included videos of celebrities that were created using face-swapping technology, then evolved into bigger hoaxes that featured pornographic content.

Reasons Deepfakes Can Be a Problem

As deepfakes have become mainstream, they have drawn media attention for their side effects around fraud and security, especially when AI alterations are used for harm rather than good.

Reasons why some are concerned about deepfakes include:

- Disinformation risks. When used improperly, deepfakes can spread false information in the media or social networks, making it hard to detect which parts are real and which are fake.

- Loss of trust. Because misused deepfakes look so realistic, some people can lose trust in legitimate information sources, like the news and official statements.

- Ethical considerations. Non-consensual deepfake alterations, revenge videos, and other adult-oriented content can harm minors online and cause serious emotional damage, which raises questions about privacy, consent, and overall security on the internet.

Seeing a deepfake doesn’t automatically mean that it’s harmful. Deepfake technology opens up many creative opportunities and ways to entertain the public, especially thanks to technical capabilities like visual effects.

What is a Deepfake Attack?

A deepfake attack is the use of a deepfake with the intent to cause harm. It’s a form of cyberattack, often targeted at businesses and individuals, with the intent to deceive. This cyber-enabled deception uses AI-generated audio, video, text, or images to impersonate a real person.

Deepfake attacks are an unwanted guest for businesses, mainly because they are linked to issues like:

- Credential harvesting. Attackers trick employees into sharing confidential data, including their credentials.

- Business email compromise (BEC). Attackers send targeted phishing emails backed by a realistic video or voice impersonation.

For example, in cybersecurity, a deepfake attack can exploit human trust and be used as a channel to bypass traditional security controls. This makes such attacks harder to detect, especially in urgent or high-stakes situations that aren’t backed by proper security controls.

How are Deepfake Videos Created?

People create deepfake videos by training an AI model on real recordings of a specific person in order to replicate them. In one technique, algorithms analyze the target in an existing video, capture attributes like facial expressions and body language, and then apply these features to a new video.

Another way to generate deepfake videos is to overdub an existing video with newly generated fake audio that mimics the person’s voice. Similarly, for video games in particular, deepfakes can be created by mimicking a person’s voice based on their vocal patterns and enabling the voice to say anything the creator chooses.

Lastly, a popular method for deepfakes is lip-syncing. Here, the goal is to match a voice recording to a video and make it seem like the person in the video is actually saying the recorded words. However, the audio for the video can also be a deepfake, which can be even more confusing.

Famous Positive Deepfake Examples

Deepfakes are often associated with malicious examples, such as revenge porn and, in today’s tense geopolitical environment, attempts to shift the narrative on important political or economic issues. However, a deepfake can be positive as well. For example, David Beckham was featured in an official “Malaria Must Die” campaign that raised awareness about malaria. It was a video of the football star that used deepfake technology to show him speaking in nine different languages.

Another beneficial use of deepfakes is AI-generated videos of news reporters. Reuters, for example, has showcased such videos with virtual reporters and personalized broadcasts, including sports news summaries. As with the Beckham campaign, news sites use dubbing in various languages to make the news more accessible.

Deepfakes are also popular for creating or enhancing existing art pieces. They are used in the film industry and in music. Another great example is the “Dalí Lives” exhibition at the Dalí Museum in St. Petersburg, Florida. The technology is used to create a realistic likeness of the famous artist, mimicking his voice and enabling him to speak to the museum’s visitors.

Why are Deepfakes Risky?

Bad actors use deepfakes to fulfill their malicious intentions, which vary depending on the use case. For example, people create deepfakes to:

- Bypass identity verification systems. Criminals create fake AI videos to pass biometric and liveness checks and open new bank accounts, or use voice samples from a victim’s social media page to collect personal data and take over accounts.

- Harass or blackmail another person. Deepfakes are commonly misused to create explicit content, for example, by superimposing a person’s face onto a pornographic video. This can be a form of blackmail used to damage another person’s reputation.

- Steal sensitive information. Deepfakes can be used as an impersonation tool to steal another person’s credentials or credit card info. This technique has been used to impersonate company executives in order to access personal information, posing a serious threat to the whole organization.

In general, deepfakes are risky because they are often closely tied to all sorts of scams. A good example is the social engineering attack that used deepfake audio and fake emails to scam a company in the United Arab Emirates. This corporate heist resulted in $35 million in losses after the attackers successfully impersonated the company’s director and received transfers from employees.

Similarly, a deepfake video was used to impersonate Sam Bankman-Fried back in 2022. In the video, which a user posted on X (then Twitter), the fake FTX founder claimed to offer free crypto to all users who were affected by FTX’s collapse.

How Realistic are Deepfakes Going to Get?

Even though deepfake detection methods keep improving, deepfake creators continue to find new ways to create even more convincing results. For example, people in early deepfakes didn’t blink realistically. Now, such AI-powered videos have subjects that talk, blink, move and look like real people, with minimal or no flaws.

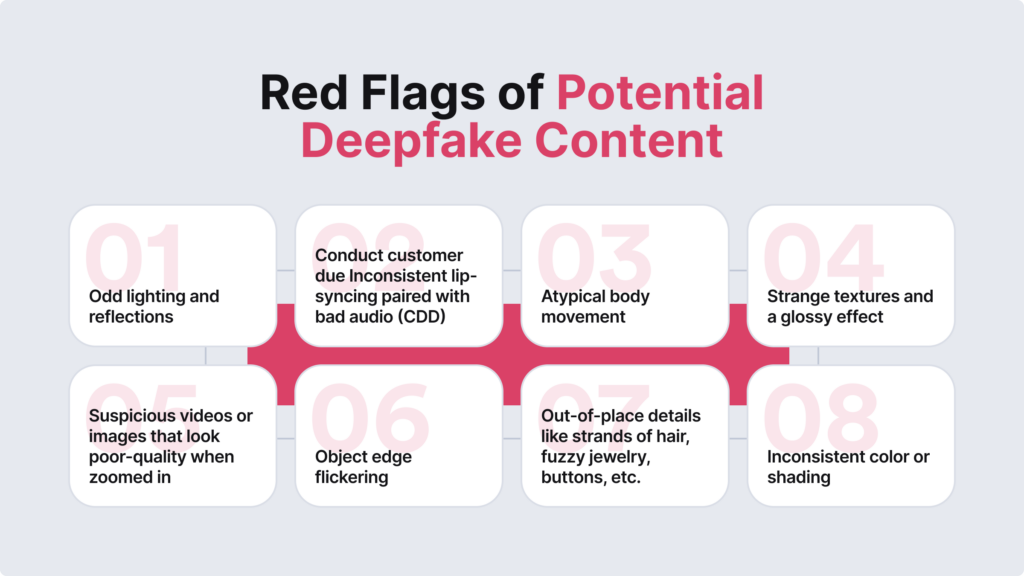

Specialists say that deepfakes are likely to get even better and become harder to spot, and that’s why we have to be prepared. Research groups and multiple universities are addressing these challenges by conducting research and spreading information. Their work helps people recognise the threat of deepfakes, learn from real-life examples, and spot the red flags that indicate a deepfake is being misused for fraud or to harm others online.

Can Artificial Intelligence Detect Deepfakes?

AI can identify deepfake content. That’s why many security specialists agree that you have to fight AI with AI itself. This is especially important now that deepfakes have become more sophisticated and their anomalies harder to distinguish. AI can be trained to spot these manipulations and detect unnatural human expressions or voices.

There are several strategies for using artificial intelligence to detect deepfakes. For example, a common practice is a two-stage process of capture and analysis. This involves recording images or videos of the physical world and then checking the captured content for authenticity by assessing its key details, such as objects or facial features.

Recent studies have also explored using general-purpose LLMs like ChatGPT for deepfake detection. Research from the University at Buffalo shows that tools and systems similar to ChatGPT can identify signs of manipulated images. They still perform much worse than specialized deepfake detection algorithms, mainly because they are not designed for deepfake detection. Even so, they can be useful for less tech-savvy people who use AI to chat and receive simple explanations, for example, by asking ChatGPT to explain why something looks fake (such as visual inconsistencies like lighting errors).

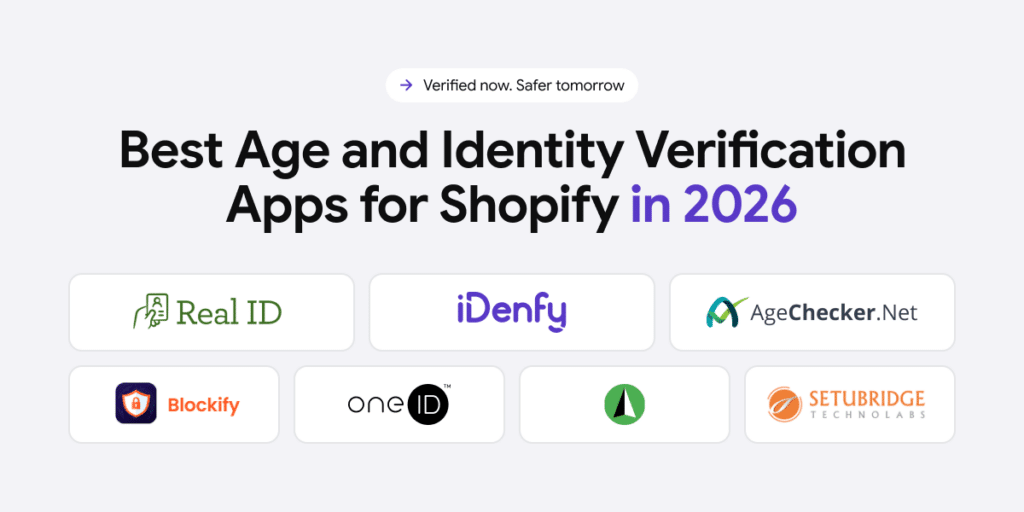

On a more practical level, fraudulent deepfakes are a pressing issue in identity verification and user onboarding, where many regulated industries are obliged to verify their users during the registration process. Unlike general-purpose AI models, onboarding software solutions are specifically designed to spot fraud. For this reason, even less strictly regulated sectors now use robust ID verification measures as a first line of defense. These measures detect both forged ID documents and other attempts to bypass Know Your Customer (KYC) checks, such as deepfakes submitted during the selfie verification stage.

Examples of Deepfakes Used During Identity Verification

A particularly important evolution for identity verification teams is the emergence of real-time deepfakes. This is where the buzzword of “generative AI” comes into play.

For example:

- An AI-generated video of a fraudster’s chosen identity can be injected directly into a live video stream during an onboarding or KYC session, rather than being pre-recorded, as was traditionally the case.

- Unlike old-school deepfakes that are created in advance and played back on a screen, these attacks feed fabricated media directly into the video pipeline before it reaches the verification system.

This means passive detection methods that look for screen-replay artifacts (reflections, moiré patterns, lighting inconsistencies) can be less effective than ID verification software that is backed by active liveness detection technology. At iDenfy, we offer both options, depending on your risk management requirements.

iDenfy’s Deepfake Detection Solution

At iDenfy, we offer an AI-powered, fully automated verification solution that accurately detects deepfakes and other fraudulent behavior, allowing you to catch fraudsters right before they actually access your network by:

- Comparing selfies to document photos.

- Automatically determining the user’s risk score.

- Assessing other behavioral signals, like IP addresses.

- Adding extra steps for high-risk users, such as watchlist screening or adverse media screening.
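To make these steps more concrete, here is a minimal sketch of how such signals could be combined into a risk score. It is not iDenfy’s actual implementation: the `Session` fields, the weights, the threshold, and the step names are all hypothetical, and the face embeddings are assumed to come from some separate face recognition model.

```python
import math
from dataclasses import dataclass


def cosine_similarity(u, v):
    """Similarity of two embedding vectors; returns 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


@dataclass
class Session:
    selfie_embedding: list    # hypothetical face embedding of the selfie
    document_embedding: list  # hypothetical face embedding of the ID photo
    ip_is_proxy: bool         # example behavioral signal


def risk_score(session: Session) -> float:
    """Return a score in [0, 1]; higher means riskier. Weights are illustrative."""
    match = cosine_similarity(session.selfie_embedding, session.document_embedding)
    score = 0.75 * (1.0 - max(match, 0.0))        # selfie vs. document mismatch
    score += 0.25 if session.ip_is_proxy else 0.0  # suspicious IP signal
    return min(score, 1.0)


def next_steps(score: float, threshold: float = 0.5) -> list:
    """Add extra checks only for sessions at or above the assumed risk threshold."""
    if score >= threshold:
        return ["watchlist_screening", "adverse_media_screening"]
    return []


if __name__ == "__main__":
    session = Session([0.9, 0.1], [0.1, 0.9], ip_is_proxy=True)
    score = risk_score(session)
    print(round(score, 2), next_steps(score))
```

A real system would weigh many more signals and tune its thresholds against labeled fraud data rather than fixed constants.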

Unlock your free trial here.